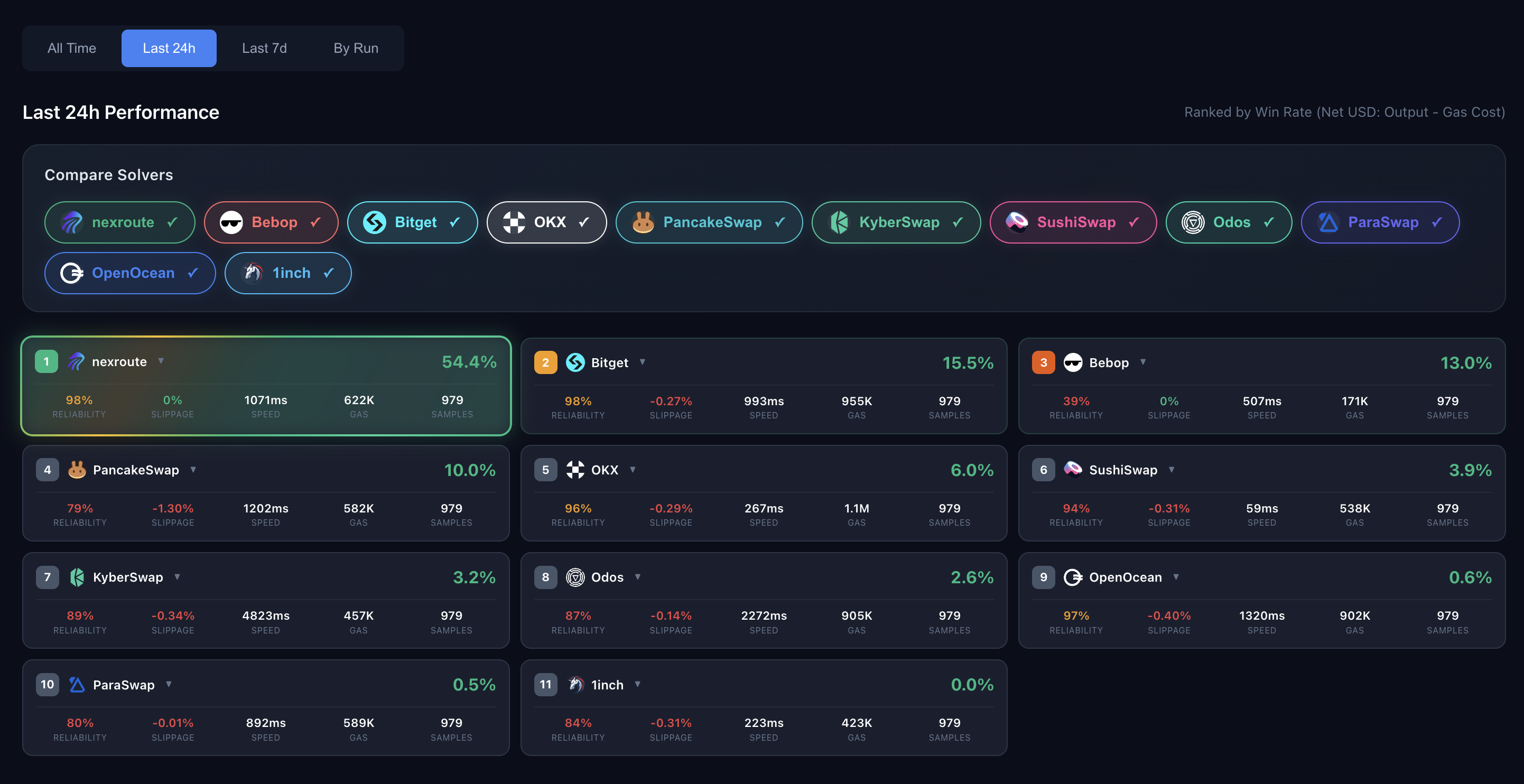

Benchmark Platform

We maintain a private benchmark platform at benchmark.nexroute.io that continuously tests nexroute against major aggregators. Access to the platform is shared on a case-by-case basis with prospective partners and integrators.

To request access to the benchmark platform, please contact your nexroute business development representative or request API access.

Aggregators Tested

We benchmark against the following swap aggregators:

- 1inch

- 0x

- Bebop

- Bitget

- KyberSwap

- LiFi

- OKX

- Odos

- OpenOcean

- ParaSwap

- PancakeSwap

- SushiSwap

- Transit

Key Metrics

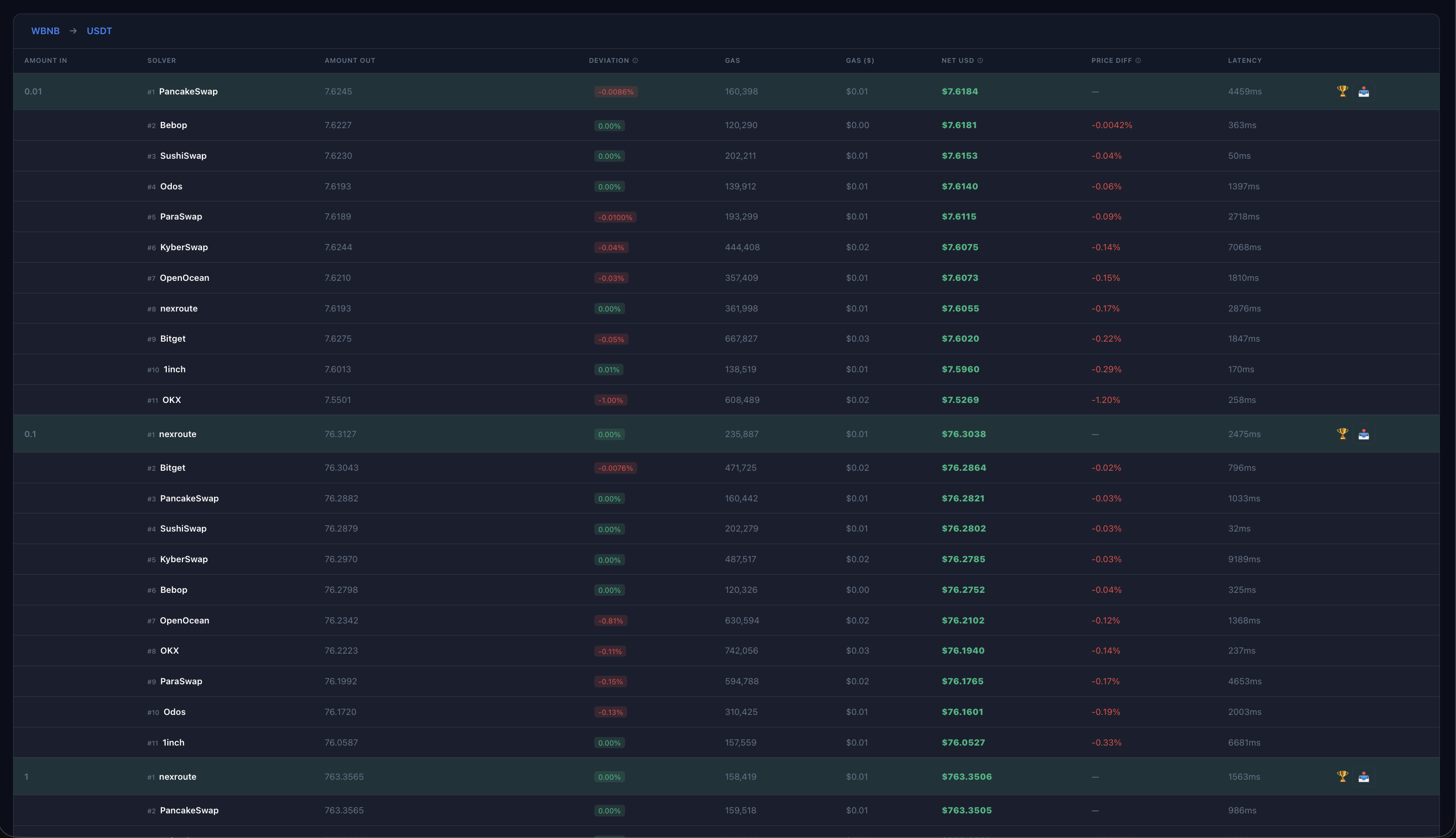

Net USD (Primary Metric)

USD value of the output token amount minus gas costs. The most important metric for evaluating overall value delivered to users.

Output Amount

Raw token output before gas costs.

Quote Latency

API response time in milliseconds.

Success Rate

Percentage of quote requests that return a successful response rather than an error or timeout.

Gas Cost

Estimated transaction cost. Directly impacts Net USD.

Methodology

Testing Infrastructure

Our benchmark runs on a custom BSC geth node with specialized benchmarking capabilities.

Benchmark Process

Every 30 minutes, a predefined list of trades is executed through the benchmark node. Each trade specifies an input token, output token, and input amount. When the benchmark node receives a trade, the following process occurs:

- Simultaneous Quote Requests: The node fires quote requests to all solver APIs concurrently for the given trade. Each request specifies:

  - Zero custom fees (where the solver supports it)

  - Maximum slippage tolerance

  - Identical trade parameters (tokens, amount)

- State Cloning: As soon as all quote requests are dispatched, the benchmark node clones the current blockchain state. This becomes the reference state for all simulations.

- Fair Execution Simulation: Each solver’s transaction data is executed against a fresh copy of the reference state, regardless of the solver API’s response time. This ensures:

  - All solvers are tested against identical market conditions

  - Execution outcome reflects the moment the user would have requested the quote

  - No solver gains an advantage from slower API response times

- Data Persistence: Trade details, metrics, transaction data, and execution outcomes are stored in a database for analysis.
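The steps above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the benchmark node's actual code: `fake_quote` and `simulate` are hypothetical stand-ins for a solver's quote API and for on-chain execution, and the chain state is modeled as a plain dictionary. The tokens, amounts, and delays are made up.

```python
import asyncio
import copy
from dataclasses import dataclass


@dataclass(frozen=True)
class Trade:
    token_in: str
    token_out: str
    amount_in: int


async def fake_quote(solver: str, trade: Trade, delay: float) -> dict:
    """Hypothetical stand-in for a solver's HTTP quote API."""
    await asyncio.sleep(delay)  # models differing API response times
    return {"solver": solver, "calldata": f"{solver}:{trade.amount_in}"}


def simulate(state: dict, quote: dict) -> dict:
    """Hypothetical stand-in for executing calldata against a state copy."""
    state["executed"].append(quote["calldata"])  # mutates only the copy
    return {"solver": quote["solver"], "block": state["block"]}


async def benchmark_trade(trade: Trade, chain_state: dict, delays: dict) -> list:
    # 1. Schedule all quote requests so they run concurrently.
    tasks = [asyncio.create_task(fake_quote(s, trade, d)) for s, d in delays.items()]
    # 2. Clone the chain state right after dispatch; this is the reference state.
    reference = copy.deepcopy(chain_state)
    chain_state["block"] += 1  # the live chain moves on while quotes are in flight
    quotes = await asyncio.gather(*tasks)
    # 3. Simulate every quote against its own fresh copy of the reference state.
    return [simulate(copy.deepcopy(reference), q) for q in quotes]


def run_example() -> list:
    trade = Trade("WBNB", "USDT", 10**18)
    state = {"block": 100, "executed": []}
    return asyncio.run(benchmark_trade(trade, state, {"fast": 0.01, "slow": 0.05}))
```

In this sketch both the fast and the slow solver are simulated at block 100, even though the live state has advanced by the time the slow quote arrives.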

Blockchain Reorg Handling: If the blockchain state becomes stale during a benchmark simulation (e.g., due to a blockchain reorg), the corresponding trade is discarded for all solvers to maintain fairness.
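How staleness is detected is not described here. One plausible approach, shown purely as an assumption, is to record the number and hash of the reference block and check that the canonical chain still has the same hash at that height. The names `BlockRef`, `is_stale`, and `keep_or_discard` are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BlockRef:
    number: int
    block_hash: str


def is_stale(reference: BlockRef, canonical_hash_at: dict) -> bool:
    """True if the reference block is no longer on the canonical chain."""
    return canonical_hash_at.get(reference.number) != reference.block_hash


def keep_or_discard(reference: BlockRef, canonical_hash_at: dict, results: list) -> list:
    """Return every solver's result, or none if the reference state was reorged out."""
    return [] if is_stale(reference, canonical_hash_at) else results
```

Discarding the whole trade, rather than only the affected solvers, keeps the comparison set identical for every solver.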

Metrics Tracked

For each solver and trade, the benchmark captures comprehensive metrics:

| Metric | Description |
|---|---|
| Request Time | Unix timestamp when quote was requested |
| Request Block | Block number when quote was requested |
| Response Time | Unix timestamp when quote was received |
| Response Block | Block number when quote was received |
| Advertised Amount Out | Output amount returned by the solver’s API quote |
| Advertised Gas | Gas estimate provided by the solver’s API |
| Actual Amount Out | Real output amount after simulating the transaction on-chain |
| Actual Gas | Real gas consumed during simulation |
| Quote Error | Any errors returned by the solver’s API |
| Simulation Error | Any errors during on-chain execution simulation |
| Token Pricing | ETH and USD prices for input/output tokens at execution time |
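As a rough illustration of how the primary Net USD metric could be derived from these fields, the sketch below subtracts the USD gas cost from the USD value of the simulated output. The exact formula used by the platform is not given here. The token decimals, gas price, and native-token USD price are assumptions introduced for the example, and all field names are hypothetical.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class SimulationResult:
    actual_amount_out: int    # raw output in the token's smallest unit
    actual_gas: int           # gas units consumed in simulation
    out_token_decimals: int   # assumption: needed to scale raw amounts
    out_token_usd: Decimal    # USD price of one whole output token
    gas_price_wei: int        # assumption: not among the tracked fields
    native_usd: Decimal       # assumption: USD price of the gas token


def net_usd(r: SimulationResult) -> Decimal:
    """USD value of the output minus the USD cost of gas."""
    output_usd = (
        Decimal(r.actual_amount_out) / Decimal(10) ** r.out_token_decimals * r.out_token_usd
    )
    gas_usd = Decimal(r.actual_gas * r.gas_price_wei) / Decimal(10) ** 18 * r.native_usd
    return output_usd - gas_usd


example = SimulationResult(
    actual_amount_out=600 * 10**18,  # 600 whole tokens with 18 decimals
    actual_gas=200_000,
    out_token_decimals=18,
    out_token_usd=Decimal("1"),
    gas_price_wei=10**9,             # 1 gwei, illustrative only
    native_usd=Decimal("600"),
)
```

With these made-up inputs the gas cost is 0.0002 of the native token, or 0.12 USD, so `net_usd(example)` evaluates to 599.88.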

Fairness Measures

- ✅ All solvers execute against identical blockchain state snapshots

- ✅ Concurrent API requests eliminate timing bias

- ✅ Zero custom fees and max slippage for best-case testing

- ✅ Public APIs only - no special access

- ✅ Transparent error tracking for API and simulation failures

- ✅ Trades discarded for all solvers during blockchain reorgs

Data Availability

The benchmark website computes aggregated results and provides:

- Performance comparisons across all solvers

- Historical trend analysis

- Downloadable result data for verification and independent analysis
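For independent analysis of downloaded results, a per-solver summary might look like the following sketch. The row layout (`solver`, `net_usd`, `error`) is a hypothetical schema chosen for illustration, not the platform's export format, and the aggregation shown is one reasonable choice rather than the site's own method.

```python
from collections import defaultdict
from statistics import mean


def aggregate(rows: list) -> dict:
    """Per-solver success rate and mean Net USD over successful trades."""
    by_solver = defaultdict(list)
    for row in rows:
        by_solver[row["solver"]].append(row)

    summary = {}
    for solver, items in by_solver.items():
        # Only trades without a quote or simulation error count as successes.
        ok = [r["net_usd"] for r in items if r["error"] is None]
        summary[solver] = {
            "success_rate": len(ok) / len(items),
            "mean_net_usd": mean(ok) if ok else None,
        }
    return summary
```

Averaging only over successful trades keeps failures from being silently counted as zero output; the success rate reports them separately.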

Running Your Own Tests

Running Tests

Step-by-step guide with code examples for reproducing benchmarks